How we analyze winning Meta & TikTok ads in 90 seconds

Why we threw out the 5-step frame-extraction pipeline and now feed the raw video to a multimodal model.

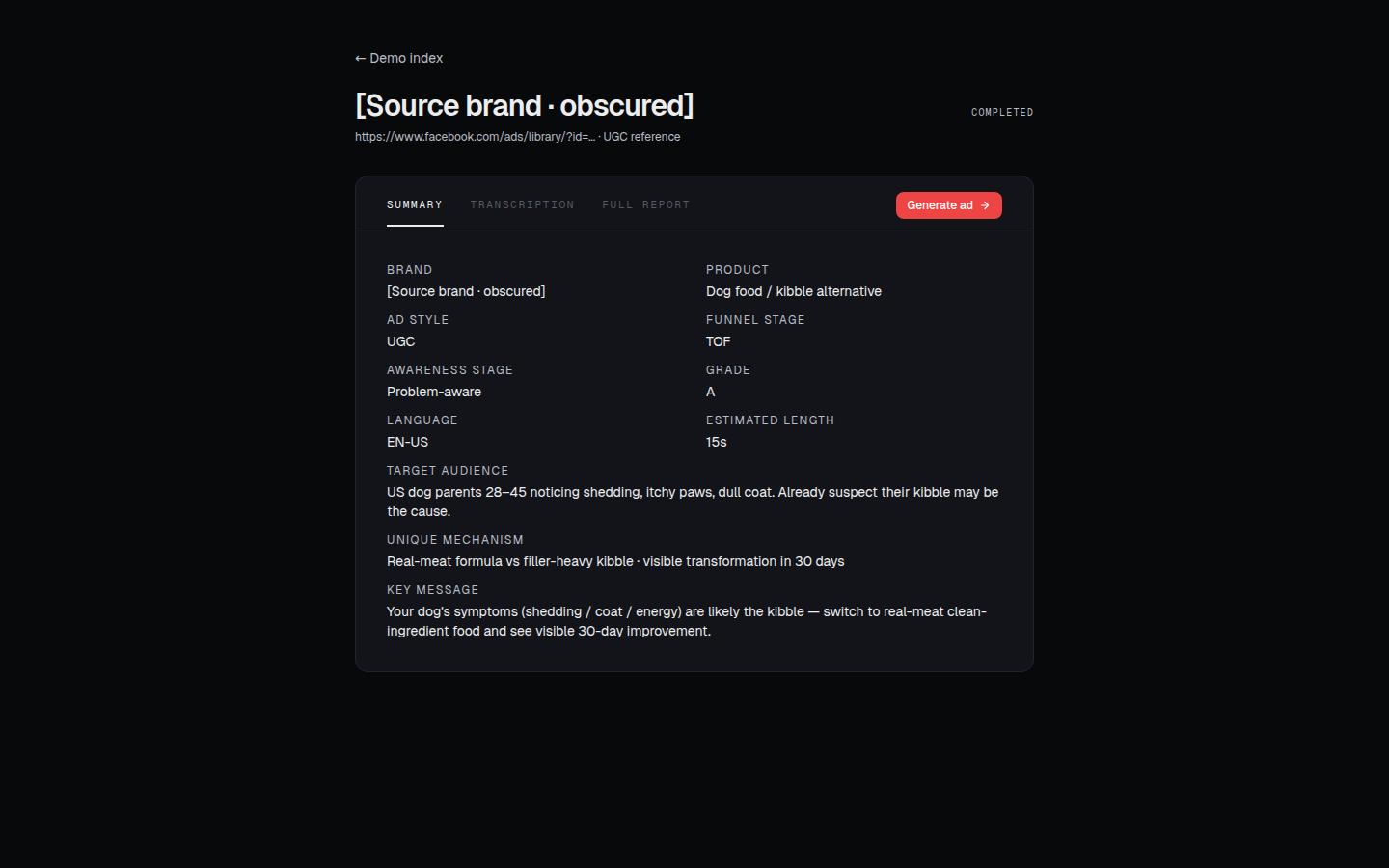

Ninety seconds. That's how long it takes us to turn a Meta or TikTok ad URL into a structured analysis report — hook strength, emotional arc, CTA mechanics, creative grade, the whole reverse-engineering of why the ad works. The first version of that pipeline took five minutes, cost ten times more, and was still worse. Here's what changed.

What "analyzing a winning ad" actually means

If you've ever tried to learn from a competitor's UGC ad, the workflow is depressingly familiar. You watch it once. You pause and rewatch the hook. You note the line that made you stop scrolling. You squint at the b-roll for the product shot. You try to articulate why the reaction is natural and not stilted. Twenty minutes later you have a Notion page that's mostly vibes.

Multiply that by the ten ads in your competitor's library. Multiply again by every category you're testing. The work is real, the output is mush, and after all of it you still don't know which beats matter and which were happy accidents.

We wanted a tool that does what a senior creative strategist does — but in 90 seconds, on every ad, every time. Same rubric. Same depth. No "I was tired, I'll just skim this one."

The first pipeline (and why we threw it out)

The obvious way to build this — the way every example tutorial on the internet does it — is to break the video apart and analyze the pieces.

So our first cut looked like this:

- Pull the mp4 from the ad URL

- Extract every keyframe with ffmpeg

- Run each frame through a vision model for descriptions

- Transcribe the audio with Whisper

- Hand the text + frame descriptions to GPT for synthesis
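For a sense of what the two purely mechanical steps in that list involve, here is a minimal sketch that only builds the ffmpeg commands. The ffmpeg options are standard ones; the function names, file names, and the 16 kHz mono audio format are illustrative assumptions, not our production code.

```python
import shlex
from pathlib import Path


def keyframe_cmd(video: Path, out_dir: Path) -> list[str]:
    """Build an ffmpeg command that writes only keyframes (I-frames) as PNGs."""
    return [
        "ffmpeg", "-i", str(video),
        "-vf", "select='eq(pict_type,I)'",  # pass through I-frames only
        "-vsync", "vfr",  # no duplicated frames; newer ffmpeg prefers -fps_mode vfr
        str(out_dir / "frame_%04d.png"),
    ]


def audio_cmd(video: Path, out_wav: Path) -> list[str]:
    """Build an ffmpeg command that drops the video stream and writes mono 16 kHz audio."""
    return ["ffmpeg", "-i", str(video), "-vn", "-ac", "1", "-ar", "16000", str(out_wav)]


if __name__ == "__main__":
    # Print the commands instead of running them; pass the lists to subprocess.run to execute.
    print(shlex.join(keyframe_cmd(Path("ad.mp4"), Path("frames"))))
    print(shlex.join(audio_cmd(Path("ad.mp4"), Path("ad.wav"))))
```

Even in sketch form, the shape of the problem is visible: two separate outputs that something downstream has to stitch back together.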

It worked. It was also a mess.

Frames lose the thing that makes the ad work. A reaction shot is funny because of the cut into it — the timing, the audio sting, the half-beat of silence. Hand it to a vision model as a still and you get "young woman, surprised expression, kitchen background." All the edit grammar — the rhythm, the cadence, the performance — vaporizes the moment you turn 24 frames per second into 12 thumbnails per minute.

Five steps means five places to fail. A sparse frame sample would silently distort the read on an ad's pacing. Whisper would mishear product names in noisy hooks. The synthesis prompt had to forgive both, and it ended up writing reports that hedged everything. Every layer of stitching lost a little more fidelity.

It was also slow and expensive. End-to-end ~5 minutes per ad. Vision tokens for 30+ frames per video. Whisper compute. A long synthesis prompt because the model needed everything spelled out as text. You can run that occasionally; you can't run it on every ad in someone's competitor library.

So we built it, ran a few hundred ads through it, watched the report quality plateau at "fine," and started looking for the version that didn't pretend a video was a slideshow.

Feeding the video directly to the model

The shift was small to describe and big to ship: skip the disassembly. Send the mp4 to a multimodal model that natively understands video — one model call, one context window, one piece of grounded reasoning over the whole 30-second creative.

In that single pass the model sees frames, hears audio, reads on-screen text, and follows the cut rhythm. No frame extraction. No transcription step. No frame-description tokens. The synthesis model and the perception model are the same model, which means nothing gets lost in translation between them.
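The single call can be sketched as one request body that carries the whole video plus the rubric prompt. This is a sketch under assumptions: the field names (`parts`, `inline_video`, `response_format`) are hypothetical and stand in for whatever schema your model provider documents, and base64 inlining is just one common way to ship a small file.

```python
import base64
import json


def build_request(video_bytes: bytes, rubric_prompt: str) -> str:
    """Serialize one request: the raw mp4 and the analysis prompt, nothing else.

    All field names here are hypothetical placeholders, not a real provider API.
    """
    payload = {
        "parts": [
            {
                "inline_video": {
                    "mime_type": "video/mp4",
                    "data": base64.b64encode(video_bytes).decode("ascii"),
                }
            },
            {"text": rubric_prompt},
        ],
        "response_format": "json",  # hypothetical flag asking for structured output
    }
    return json.dumps(payload)
```

There is no frame list, no transcript, and no intermediate description in that payload. Everything the model needs is the video itself and the rubric.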

The diff in output quality was not subtle. Hook descriptions stopped being "she says X, then she says Y" and started being "the hook lands on a half-beat pause before the product reveal — the line itself is plain, but the cut sells it." That's the difference between describing a joke and getting it.

What the analysis report actually contains

A finished report is about 800–1200 words of structured copy plus a handful of grades. It walks the ad from cold open to CTA in roughly the order a viewer experiences it, and it scores each beat on the dimensions a creative strategist would care about:

- Hook mechanics — what makes the first 1.5 seconds stop the scroll. Pattern interrupt? Curiosity gap? Direct callout? With a critique of how cleanly it lands.

- Emotional arc — where the ad moves the viewer, and how. Not vibes; specific lines, specific cuts.

- Product reveal — when the product first enters the frame, how naturally it's introduced, how the demonstration is staged.

- Proof / authority signals — testimonials, data, before/after, social proof. Which signals are doing real work vs. which are decoration.

- CTA effectiveness — the call-to-action's specificity, urgency, and friction-removal.

- Replication notes — what's transferable to a different brand and what's load-bearing on this specific creator/product.

There's also a creative grade — a top-line letter score plus a one-paragraph "why this won" — that's deliberately hard to game. It's calibrated against a reference set of ads we've graded by hand, so an A+ from the model means roughly what an A+ from a senior strategist would mean.
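One way to picture the report is as a typed record, with one entry per dimension above plus the top-line grade. The sketch below is illustrative only: the class names, field names, the 1-10 beat score, and the set of letter grades are all assumptions, since the exact schema isn't spelled out here.

```python
from dataclasses import dataclass, asdict

# Hypothetical grade scale; the real rubric may use different steps.
VALID_GRADES = ("A+", "A", "B", "C", "D", "F")


@dataclass
class Beat:
    """One scored dimension of the report."""
    critique: str  # prose tied to specific lines and cuts
    score: int     # hypothetical 1-10 scale


@dataclass
class AdReport:
    hook_mechanics: Beat
    emotional_arc: Beat
    product_reveal: Beat
    proof_signals: Beat
    cta_effectiveness: Beat
    replication_notes: str  # what transfers to another brand and what doesn't
    creative_grade: str     # top-line letter score
    why_this_won: str       # the one-paragraph rationale

    def __post_init__(self) -> None:
        # Reject grades outside the assumed scale so malformed output fails loudly.
        if self.creative_grade not in VALID_GRADES:
            raise ValueError(f"unknown grade: {self.creative_grade!r}")
```

Calling `asdict(report)` turns the record into plain nested dictionaries, which is convenient if the report needs to be stored or rendered elsewhere.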

What the report is not doing: it's not telling you to copy the ad. It's telling you which mechanics are doing the lifting, so you can apply them to your own creative without cargo-culting the surface.

Doing this cheaply enough to run on every ad

Every cost step you remove from the pipeline you get to spend on quality somewhere else.

When we killed frame extraction and transcription, we deleted three subtotals from the bill: vision tokens for 30+ frames, Whisper compute, and a long synthesis prompt that had to compensate for both. What's left is one inference call with a video input and a structured output.

That call isn't free, but it's now bounded. A 30-second creative is a known size. We know roughly what a report costs to produce, we know it produces consistent output, and we can run it on every ad a user uploads without having a budget conversation each time. That's the unlock — not just better analysis, but analysis at the throughput of someone's actual research workflow.
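"Bounded" here just means the cost is a simple function of video length. A minimal sketch of that arithmetic follows; every parameter (tokens per second of video, prompt and output sizes, per-token prices) is a placeholder you would fill in from your own provider's pricing, not a figure from our bill.

```python
def estimate_cost_usd(
    duration_s: float,
    tokens_per_video_second: float,
    prompt_tokens: int,
    output_tokens: int,
    usd_per_million_input: float,
    usd_per_million_output: float,
) -> float:
    """Estimate the cost of one analysis call from the video duration.

    Assumes input tokens grow linearly with duration, which is a simplification.
    """
    input_tokens = duration_s * tokens_per_video_second + prompt_tokens
    total = input_tokens * usd_per_million_input + output_tokens * usd_per_million_output
    return total / 1_000_000
```

Because the video term scales linearly with duration, a cap on ad length is also a cap on spend per report.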

What's still hard

Three things, honestly:

Long-form video. A 30-second ad is fine. A two-minute YouTube pre-roll or a long-form TikTok is harder — sending a video that big inline eats your request budget fast. We've designed but not yet shipped a streaming-upload variant of the same pipeline that lifts that ceiling. That'll land in the next few weeks.
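To see why inline sending hits a ceiling, assume the video travels base64-encoded inside the request body (an assumption, though a common setup). Base64 turns every 3 raw bytes into 4 characters, so the payload is about a third larger than the file. The request limit and overhead values below are placeholders, not a real provider's numbers.

```python
def base64_len(n_bytes: int) -> int:
    """Length of the padded base64 encoding of n_bytes raw bytes."""
    return 4 * ((n_bytes + 2) // 3)


def fits_inline(video_bytes: int, max_request_bytes: int, overhead_bytes: int = 4096) -> bool:
    """True if the encoded video plus prompt/JSON overhead fits in one request.

    max_request_bytes and overhead_bytes are hypothetical; check your provider's limit.
    """
    return base64_len(video_bytes) + overhead_bytes <= max_request_bytes
```

A check like this is the natural branching point: small creatives go inline, and anything that fails it would be routed to an upload path instead.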

Distinguishing performance from script. Sometimes an ad wins because the creator is exceptional and the script underneath is ordinary. The report's job is to tease those apart: to tell you which 70% is replicable and which 30% is "you'd need that specific person." We're better at this than the frame pipeline ever was, but it's the dimension where strategist-quality analysis is still meaningfully ahead of model-quality analysis.

Knowing what didn't matter. The hardest critique is "the ad would have worked without this." Models are good at describing what's there. They're worse at confidently saying a beat was load-bearing vs. ornamental. We compensate with structured prompting and a calibration set, but it's an open problem.
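A calibration set is only useful if you measure against it. Here is a rough sketch of one way to compare model grades with hand grades on the same ads. The grade ordering, the function name, and the three summary metrics are assumptions for illustration, not a description of our actual calibration process.

```python
GRADE_ORDER = ["F", "D", "C", "B", "A", "A+"]  # hypothetical scale, worst to best


def calibration_summary(model: dict[str, str], hand: dict[str, str]) -> dict[str, float]:
    """Compare model grades to hand grades on the ads present in both sets."""
    shared = sorted(model.keys() & hand.keys())
    if not shared:
        raise ValueError("no ads in common between the two grade sets")
    # Signed distance in grade steps: positive means the model graded higher.
    diffs = [GRADE_ORDER.index(model[ad]) - GRADE_ORDER.index(hand[ad]) for ad in shared]
    n = len(diffs)
    return {
        "exact_agreement": sum(d == 0 for d in diffs) / n,
        "within_one_step": sum(abs(d) <= 1 for d in diffs) / n,
        "mean_bias": sum(diffs) / n,
    }
```

A mean bias near zero with low exact agreement would suggest noise rather than systematic grade inflation, which calls for a different fix than a consistently generous model does.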

What this becomes downstream

The analysis report isn't the destination — it's the input to a generator. Once we know precisely why an ad works, we can write a UGC replication script that preserves the winning mechanics without copying the surface. Then we can hand that script to an AI video generator and produce a finished creative for your brand, your product, your character. Same workflow. One pasted URL away from a finished video ad.

But that's the next dispatch.

If you want to see the analysis report on an ad you're actually studying, the CTA below will take you straight to it — it's the fastest way to understand what 90 seconds of structured output looks like vs. a Notion page of vibes.